3D Bird Reconstruction

A Dataset, Model, and Shape Recovery from a Single View

Abstract

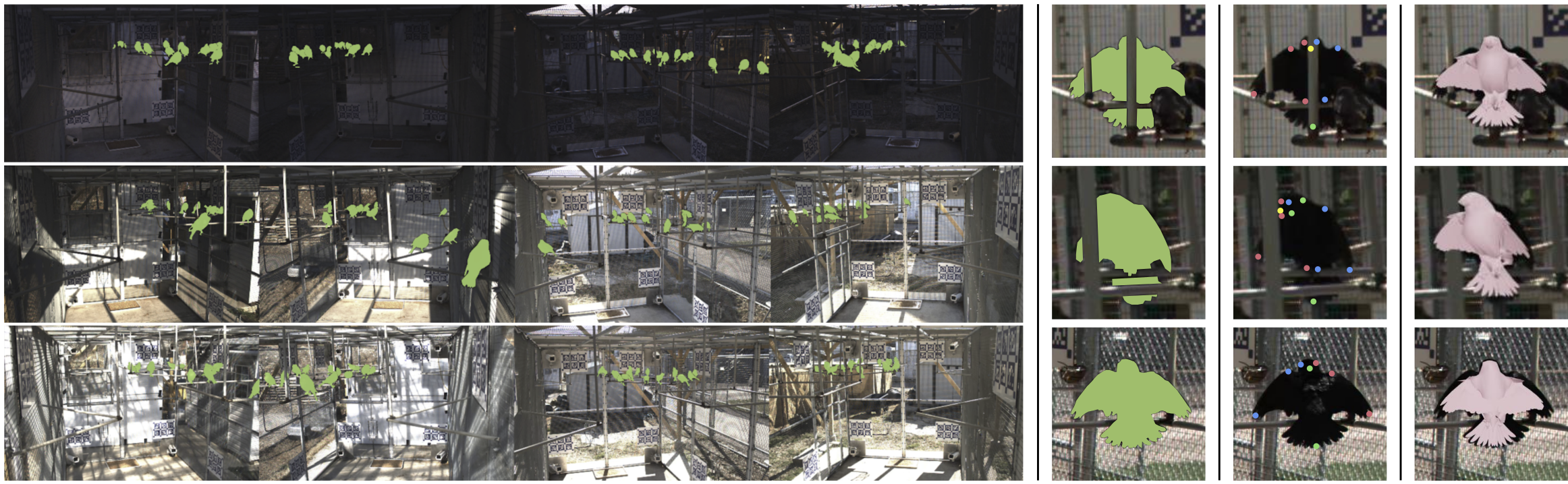

Automated capture of animal pose is transforming how we study neuroscience and social behavior. Movements carry important social cues, but current methods cannot robustly estimate the pose and shape of animals, particularly social animals such as birds, which are often occluded by one another and by objects in the environment. To address this problem, we first introduce a model and a multi-view optimization approach, which we use to capture the unique shape and pose space displayed by live birds. We then introduce a pipeline and experiments for keypoint, mask, pose, and shape regression that recovers accurate avian postures from single views. Finally, we provide extensive multi-view keypoint and mask annotations collected from a group of 15 social birds housed together in an outdoor aviary.
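
To make the multi-view idea concrete, the following is a minimal sketch of a keypoint reprojection objective of the kind a multi-view fit could minimize. It is an illustration under stated assumptions, not the authors' implementation: the function names `project` and `multiview_keypoint_loss`, the use of 3x4 pinhole camera matrices, and the boolean visibility mask for occluded keypoints are all hypothetical choices made for this example.

```python
import numpy as np

def project(points_3d, P):
    """Project (N, 3) world points to (N, 2) pixels with a 3x4 camera matrix P."""
    points_3d = np.asarray(points_3d, dtype=float)
    homog = np.hstack([points_3d, np.ones((len(points_3d), 1))])
    uvw = homog @ P.T
    return uvw[:, :2] / uvw[:, 2:3]

def multiview_keypoint_loss(points_3d, cameras, keypoints_2d, visibility):
    """Mean squared reprojection error over all visible keypoints in all views.

    cameras:      list of V camera matrices, each 3x4 (assumed pinhole model)
    keypoints_2d: (V, N, 2) annotated keypoints per view
    visibility:   (V, N) boolean mask; occluded keypoints are skipped
    """
    total, count = 0.0, 0
    for P, kp, vis in zip(cameras, keypoints_2d, visibility):
        residual = project(points_3d, P) - kp
        total += float(np.sum(residual[vis] ** 2))
        count += int(vis.sum())
    return total / max(count, 1)
```

Masking by visibility is one simple way to handle the occlusions described above: a keypoint hidden in one view contributes nothing there, but it can still be constrained by the other views in which it is visible.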

Overview

Dataset

Results

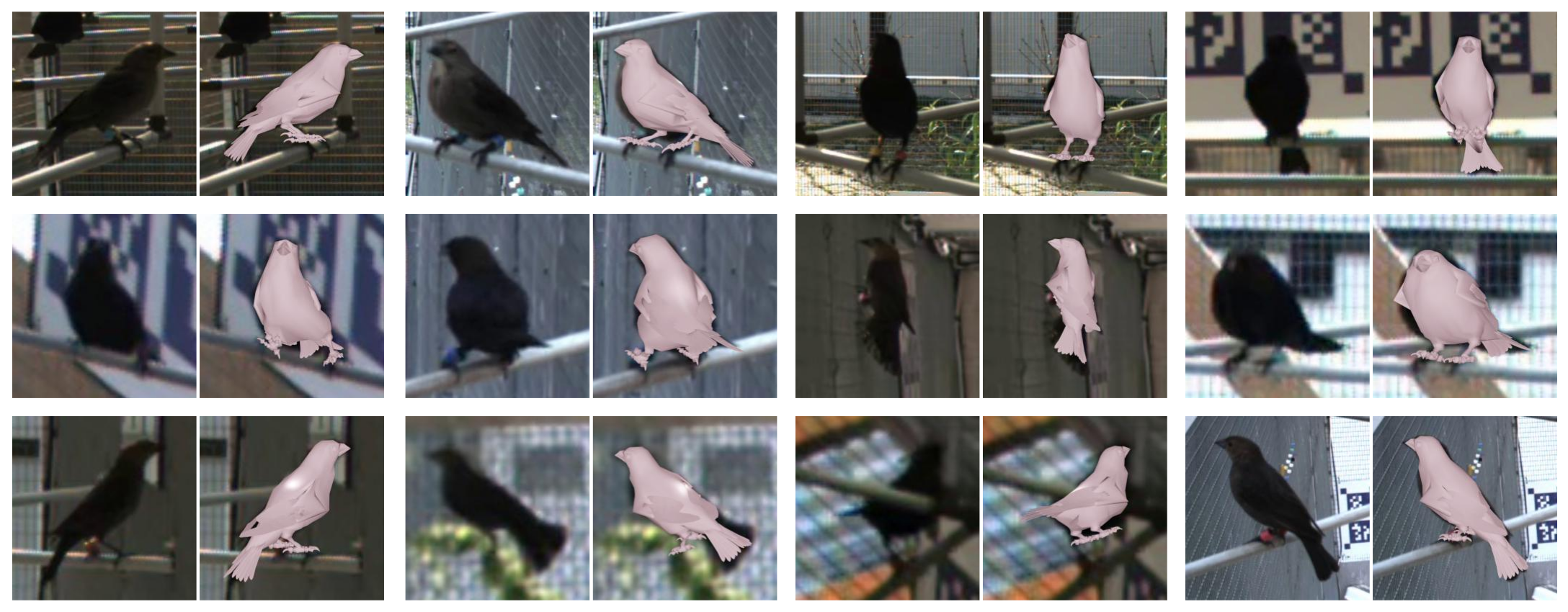

Our single-view pipeline (shown at the top) produces good qualitative fits across a variety of poses, including asymmetric, stretched, and puffed postures, and across a variety of viewpoints, including views from the front, back, sides, and below. Each panel shows the input image and the output mesh.

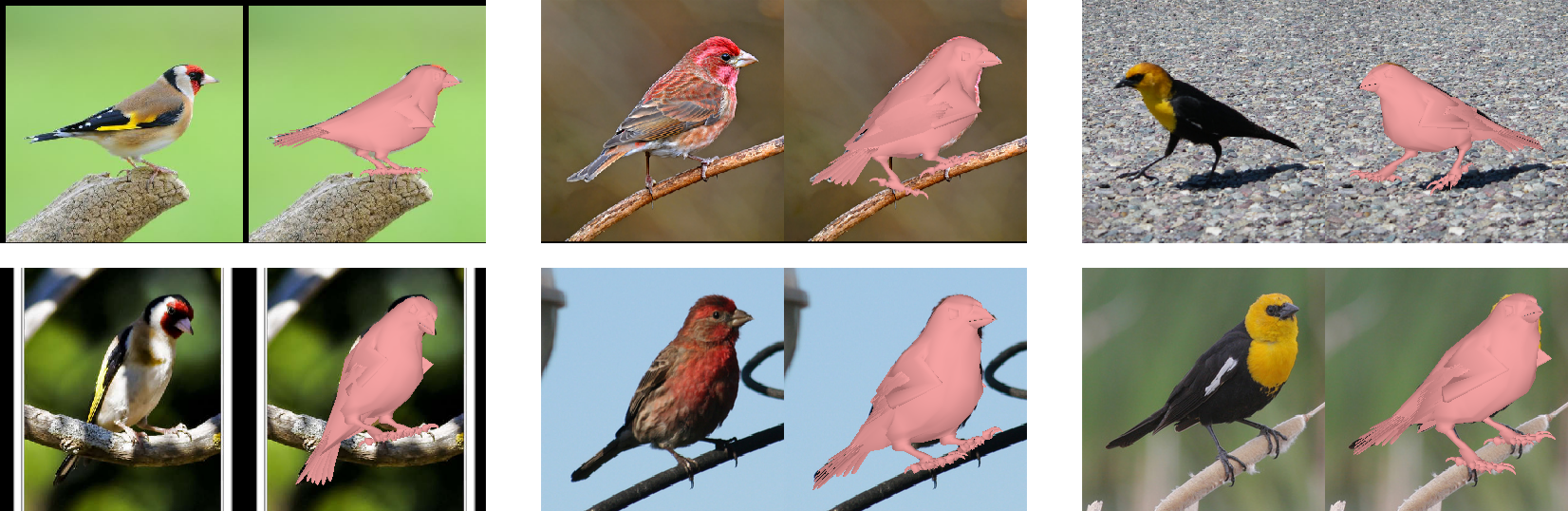

Our mesh, pose regression networks, and single-view optimization procedure generalize to similar bird species in CUB-200 using distributions of shape and pose extracted from our multi-view dataset.
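
One common way to reuse a distribution of shape or pose parameters during single-view fitting is as a prior term added to the fitting objective. The sketch below assumes a single multivariate Gaussian and a squared Mahalanobis penalty; the page does not state which distribution or penalty is used, so the Gaussian model, the function names `fit_gaussian_prior` and `prior_penalty`, and the regularization constant `eps` are all assumptions made for illustration.

```python
import numpy as np

def fit_gaussian_prior(samples, eps=1e-6):
    """Fit a mean and precision matrix to (M, D) parameter samples.

    `samples` stands in for pose or shape parameters recovered from
    multi-view fits (hypothetical input). `eps` regularizes the covariance
    so that it stays invertible.
    """
    samples = np.asarray(samples, dtype=float)
    mean = samples.mean(axis=0)
    cov = np.cov(samples, rowvar=False) + eps * np.eye(samples.shape[1])
    return mean, np.linalg.inv(cov)

def prior_penalty(params, mean, precision):
    """Squared Mahalanobis distance of `params` from the prior mean."""
    d = np.asarray(params, dtype=float) - mean
    return float(d @ precision @ d)
```

In an optimization loop, a term like `prior_penalty` would typically be weighted and added to the image-based losses, so that poses far from those observed in the multi-view data are discouraged when a single view leaves them ambiguous.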

Species shown: Red-winged Blackbird, Painted Bunting, Rose-breasted Grosbeak, European Goldfinch, Purple Finch, Yellow-headed Blackbird.

Video

Acknowledgements

We thank the diligent annotators in the Schmidt Lab, Kenneth Chaney for compute resources, and Stephen Phillips for helpful discussions. We gratefully acknowledge support through the following grants: NSF-IOS-1557499, NSF-IIS-1703319, NSF MRI 1626008, NSF TRIPODS 1934960.